AI-Powered Crypto Scams: Real Examples and How to Protect Yourself in 2026

AI is a marker of progress, and most of us enjoy using it. It is also an advanced tool for stealing money and attacking crypto wallets. As artificial intelligence evolves, scams become smarter, faster, and harder to spot with the naked eye.

According to the Chainalysis 2026 Crypto Crime Report, an estimated $17 billion was stolen in crypto scams in 2025 alone. The average payment sent to scammers grew 253% year-over-year, from $782 to $2,764. And AI-enabled scams are now 4.5 times more profitable than traditional ones.

The reason is simple: artificial intelligence has given fraudsters a superpower. They can now build convincing fake identities, run thousands of romantic conversations simultaneously, clone executives' voices, and generate perfect phishing emails — at almost zero cost. Even OpenAI CEO Sam Altman has warned publicly that AI's ability to mimic humans has "fully defeated most authentication" methods and could trigger a "significant impending fraud crisis."

Key Takeaways You Should Know About AI Scams

- AI has made crypto scams 4.5 times more profitable, and the gap is widening. Scammers with access to deepfake tools, AI chatbots, and phishing-as-a-service platforms extract an average of $3.2 million per operation, compared to $719,000 for those running scams without AI.

- Scammers are spending more time per victim and using AI to build deeper trust before asking for money.

- Deepfake technology has reached the point where a real-time video of a colleague, a romantic partner, or a celebrity can be fabricated in minutes for just a few dollars.

- Impersonation scams grew 1,400% in 2025, faster than any other category. Government agencies, toll collection systems, crypto exchanges, and banks are all being convincingly faked.

- Bad grammar is no longer a warning sign. Black-market tools like WormGPT and FraudGPT produce fluent, personalized messages in any language. The envelope looks legitimate. The logo looks right. The domain is almost correct. Scrutinizing spelling mistakes will no longer protect you.

How Scammers Are Using AI — and Why It's So Effective

Deepfakes and Face-Swap Technology

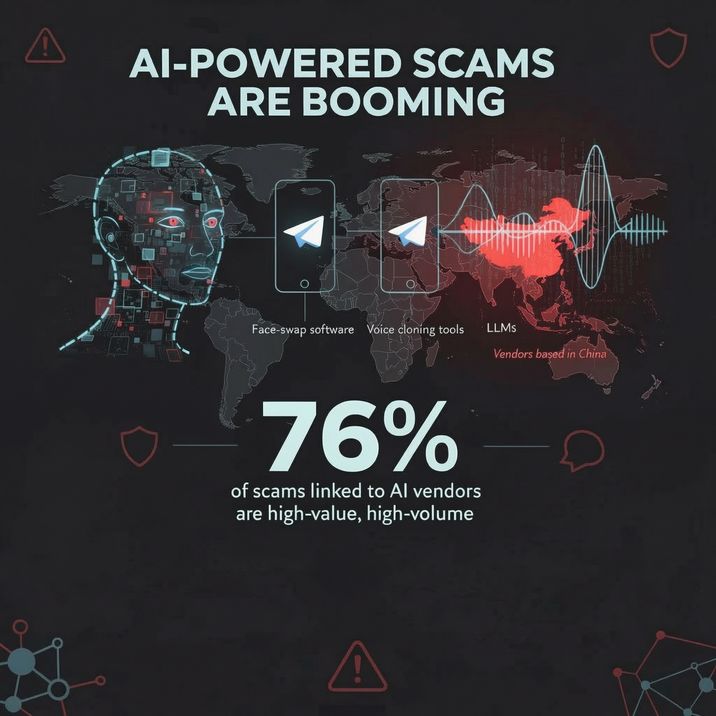

The most alarming development in modern crypto fraud is the mass availability of deepfake technology. Scammers now routinely buy face-swap software, voice cloning tools, and large language models (LLMs) from vendors operating on Telegram — many of them based in China. Chainalysis found that 76% of scams with traceable links to these AI vendors fell into the "high-value, high-volume" category — meaning AI doesn't just make scams more convincing, it makes them far more efficient and scalable.

A deepfake video of a celebrity, an executive, or even a romantic partner can be produced for just a few dollars and takes minutes to generate. The results are often indistinguishable from reality, especially to viewers who are emotionally invested in what they're seeing.

Phishing-as-a-Service

Sophisticated scamming infrastructure is now sold as a subscription product. The "Lighthouse" platform — exposed in Google's 2025 lawsuit against the Smishing Triad criminal group — offered complete phishing kits for as little as $20 to $50. These kits included hundreds of templates for fake government and corporate websites, domain setup tools, and features designed to evade detection.

According to Chainalysis, scams that use phishing kits of this type are 688 times more effective in dollar terms than ordinary scams. The Lighthouse platform alone received over 7,000 deposits and amassed more than $1.5 million in cryptocurrency over three years.

Black-Market AI Tools: WormGPT and FraudGPT

Since mainstream AI tools like ChatGPT have built-in safety filters, criminal developers have created their own unrestricted alternatives. WormGPT — originally built on the open-source GPT-J model — was designed explicitly for criminal use: writing flawless phishing emails, generating malicious code, and automating business email compromise attacks. FraudGPT, sold for up to $200 per month on dark web forums and Telegram, is tailored specifically for financial crimes — creating fake identities, spoofing banking and crypto pages, and generating scripts for crypto scams.

By 2024, cybersecurity firm KnowBe4 found that nearly 74% of the phishing emails it analyzed showed signs of AI involvement. The era of spotting scams by their bad grammar is over.

Reaching More Victims at Once

The most dangerous aspect of AI-enhanced fraud is its scale. Chainalysis data shows that AI-assisted scam operations process 9 times more transactions per day than non-AI scams. A single operator — or a small team — can manage hundreds of romantic relationships, phishing campaigns, or fake customer service conversations simultaneously. AI handles the volume; the human only steps in to close the deal.

Main Types of AI-Powered Crypto Scams

- Pig Butchering Scams (Sha Zhu Pan): The scammer builds a genuine-feeling relationship over weeks or even months. Once trust is established, the scammer introduces a "secret" crypto investment platform. Initial deposits appear to earn massive returns. The victim invests more. Then more. When they eventually try to withdraw, they're told they owe taxes, fees, or penalties to unlock their funds. They keep paying. Then the scammer disappears.

- Government Impersonation Scams: Attackers pose as state services such as tax agencies, postal services, and similar institutions. Using phishing-as-a-service platforms and convincing copies of official websites, they send messages asking you to pay government fees, postage, and other charges.

- Exchange and Platform Impersonation: This follows the same approach as government scams, but scammers present themselves as crypto exchange customer service representatives. One notable example is the Coinbase case, in which the Brooklyn District Attorney's Office indicted Ronald Spektor, a 23-year-old Brooklyn resident, for allegedly stealing nearly $16 million.

- Deepfake Celebrity Endorsement Scams: AI-generated videos of Elon Musk, Warren Buffett, and other public figures promoting fake crypto investment platforms have flooded YouTube, TikTok, and Facebook.

- Malware-Laced Scams: Chainalysis has documented a growing trend of Chinese scam networks embedding stealer malware and Remote Access Trojans (RATs) directly into scam interactions: documents, links, or apps sent to victims. A single click can give scammers complete access to a device, enabling them to drain crypto wallets entirely without ever needing to convince the victim of anything.

Real Stories: How Ordinary Americans Lost Everything to AI Crypto Scams

These are not abstract statistics. Behind every number is a person whose life was upended. Here are real, documented cases.

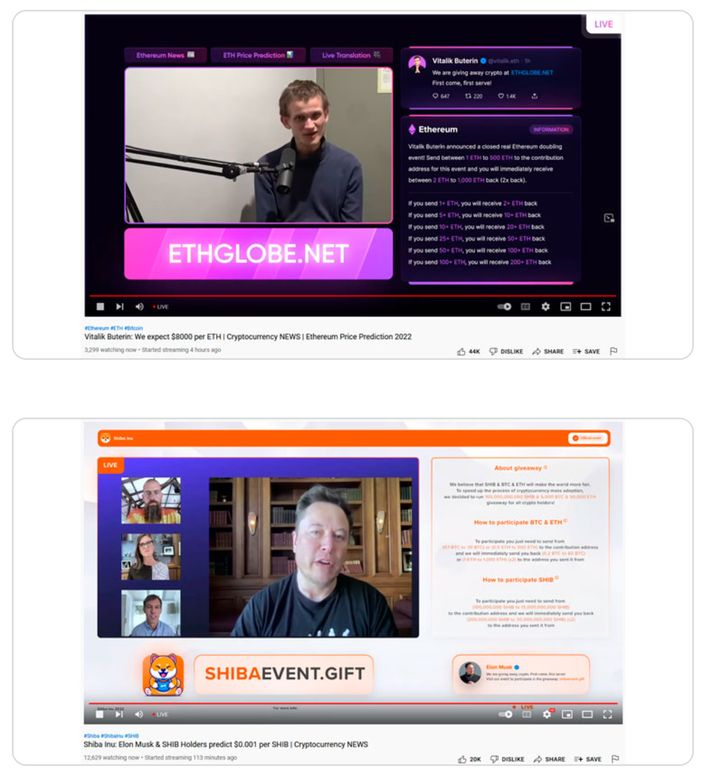

Fake Vitalik Buterin and Elon Musk — $1.6 million in 281 transactions

Global, 2022

Between February 16 and 18, 2022, the Group-IB Digital Risk Protection team identified 36 fraudulent YouTube livestreams promoting supposedly instant and substantial returns on cryptocurrency investments.

The threat actors repurposed footage of well-known entrepreneurs and crypto advocates (including Elon Musk, Brad Garlinghouse, Michael J. Saylor, Changpeng Zhao, Cathie Wood, and others), taken from legitimate events, to stage deceptive broadcasts. On average, these streams attracted between 3,000 and 18,000 viewers. One fake livestream featuring Vitalik Buterin amassed more than 165,000 viewers, who were promised that their crypto holdings would be doubled in real time.

The YouTube channels hosting these fraudulent broadcasts typically bore names associated with the featured speaker in the manipulated video. It is believed that all of these channels were either compromised or acquired through underground marketplaces.

According to Group-IB’s estimates, the wallets controlled by the scammers received more than $1.6 million in 281 transactions.

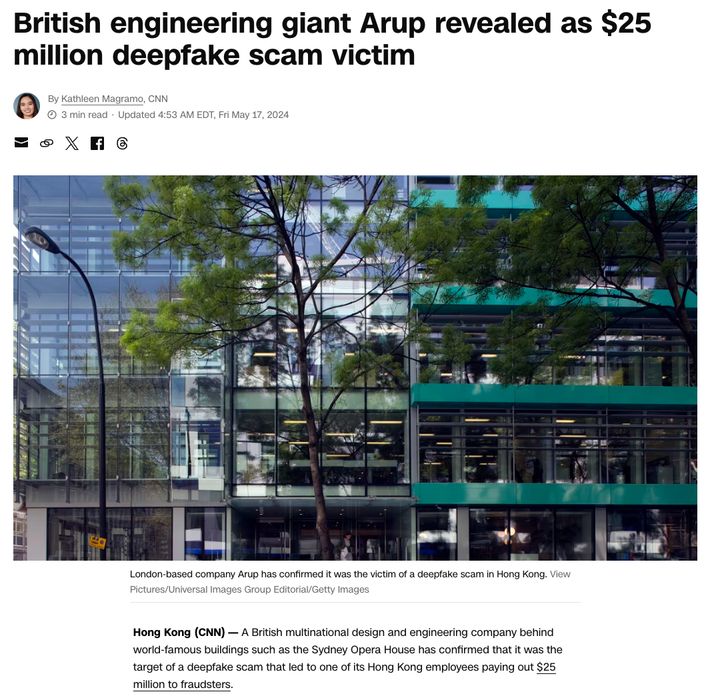

Arup Engineering — $25 Million Transferred to Deepfake Executives

Hong Kong, January 2024

A finance employee at Arup, one of the world's leading engineering firms, received an email purportedly from the company's UK-based CFO requesting a "secret" transaction. Initially suspicious, the employee agreed to join a video conference call to verify.

On the call were the CFO and several familiar colleagues, in what appeared to be a standard executive meeting. Every other participant on screen was a deepfake generated from publicly available video footage of real Arup employees. Convinced he was following direct orders from his leadership, the employee made 15 separate transfers totaling approximately $25.6 million across five Hong Kong bank accounts.

The fraud was only discovered when he followed up with Arup's actual headquarters. No systems were breached, no data was stolen. It was, as Arup's CIO Rob Greig described it, pure "technology-enhanced social engineering." The case is now studied globally as the defining template for enterprise deepfake attacks.

Red Flags of an AI-Powered Crypto Scam

These are the red flags to know — and to share with people you care about.

🚩 Unsolicited contact that quickly becomes personal.

🚩 A video call that feels slightly off — unnaturally smooth skin, odd lighting, or very slight audio delays.

🚩 Early introduction of a crypto investment opportunity.

🚩 Initial profits that seem too easy.

🚩 Pressure to use a crypto ATM. No legitimate government agency, bank, or tech company will ever ask you to withdraw cash and deposit it into a Bitcoin ATM. This is exclusively a scam tactic.

🚩 Customer service calling you about account security.

🚩 Requests for secrecy. Scammers routinely tell victims to keep their relationship or investment secret — from family, friends, and especially financial advisors. Legitimate opportunities do not require secrecy.

🚩 A celebrity video that seems too convenient.

How to Protect Yourself from AI-Powered Crypto Scams

General Rules

Never send crypto to "verify," "secure," or "unlock" an account — under any circumstances. No exchange or financial institution will ever require this. If you receive a call, email, or message about account problems, end the conversation and contact the company directly through its official website, not through any link or number provided in the message.

Treat all unsolicited investment advice from people you've met online as suspicious, regardless of how well you feel you know them. The pig butchering playbook depends on victims trusting the person making the recommendation more than they trust their own skepticism.

Read more: False Security Checklist for 2026 in Crypto Wallets: The Hidden Risk of Doing “Everything Right”

Deepfake-Specific Defenses

A reliable method to expose a deepfake video call in real time: ask the person to turn their head to the side or hold a random object in front of their face. Most deepfake systems struggle with profile angles and unexpected objects, and the image will distort or glitch. Another option is to establish a family "safe word" that can be used in calls or messages to verify that the person is genuinely who they say they are — this is especially useful to protect elderly family members.

Voice recognition is no longer a reliable authentication method. Sam Altman's warning about AI defeating authentication is not hypothetical — voice cloning tools that work from a 30-second sample are freely available.

Technical Protections

Use a hardware wallet for any significant crypto holdings — this keeps your assets offline and inaccessible to malware. Enable two-factor authentication (2FA) on all exchange accounts, using an authenticator app rather than SMS. Never click links in unexpected text messages, even if they appear to be from a known company. Check website URLs character by character — scammers regularly register domains like "coinbsae.com" or "e-zpassny-secure.com" that look legitimate at a glance.
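Checking URLs character by character is easy to get wrong by eye, so part of it can be automated. The sketch below is only an illustration of the idea, not a complete defense: it compares a link's host against a small allow-list and flags hosts that are close to, or contain, a trusted name. The `TRUSTED_DOMAINS` list, the `classify_url` helper, and the distance threshold of 2 are all assumptions made for this example.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list: replace with the domains you actually use.
TRUSTED_DOMAINS = {"coinbase.com", "e-zpassny.com"}


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: insertions, deletions, and substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


def classify_url(url: str, max_distance: int = 2) -> str:
    """Return 'trusted', 'lookalike', or 'unknown' for the URL's host."""
    host = (urlsplit(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Exact matches and subdomains of a trusted domain are accepted.
    for trusted in TRUSTED_DOMAINS:
        if host == trusted or host.endswith("." + trusted):
            return "trusted"
    # Near misses (swapped or altered letters) and hosts that embed the
    # brand name (extra words such as "-secure") are flagged.
    for trusted in TRUSTED_DOMAINS:
        brand = trusted.rsplit(".", 1)[0]  # e.g. "coinbase"
        if edit_distance(host, trusted) <= max_distance or brand in host:
            return "lookalike"
    return "unknown"
```

Under these assumptions, `classify_url("https://coinbsae.com/login")` and `classify_url("https://e-zpassny-secure.com/pay")` both return `"lookalike"`, while `classify_url("https://www.coinbase.com/")` returns `"trusted"`. An `"unknown"` result means only that the host resembles nothing on the list; it does not mean the site is safe.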

Read more: The Top 3 Crypto Security Best Practices Every Investor Needs

Password managers offer a useful secondary defense: they only auto-fill credentials on the actual website they were saved for. If you land on a fake phishing page, your password manager won't fill in your information — which is often the first sign something is wrong.
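The reason this works can be shown with a toy model. The sketch below assumes a hypothetical in-memory `VAULT` keyed by the exact host a credential was saved for, and an `autofill` helper invented for this example. Real password managers use more nuanced matching rules, so treat it as an illustration of the principle rather than how any particular product behaves.

```python
from urllib.parse import urlsplit

# Hypothetical vault: credentials keyed by the exact host they were saved for.
VAULT = {
    "www.coinbase.com": ("alice@example.com", "correct-horse-battery-staple"),
}


def autofill(page_url: str):
    """Return saved credentials only when the page's host matches exactly."""
    parts = urlsplit(page_url)
    if parts.scheme != "https":
        return None  # never fill credentials on an unencrypted page
    host = (parts.hostname or "").lower()
    return VAULT.get(host)  # None for any host that was never saved
```

Here `autofill("https://www.coinbase.com/signin")` returns the saved pair, while `autofill("https://www.coinbsae.com/signin")` returns `None`. The lookup compares strings rather than appearances, so a domain that fools the eye still fails the match.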

Frequently Asked Questions

How do deepfake crypto scams work?

Scammers use AI tools to create fake but realistic videos, voices, or entire identities. They clone a celebrity’s likeness to promote a fake investment platform in a “live” YouTube or Facebook stream, fabricate a romantic partner who conducts months of conversation via AI chatbot and deepfake video calls, or impersonate a victim’s own executives during a business video conference.

Can I get my money back if I was scammed in crypto?

In most cases, unfortunately, no. Cryptocurrency transactions are irreversible by design — once funds leave your wallet, they cannot be recalled unilaterally. However, there are a few scenarios where partial recovery is possible: if you report quickly, your exchange may be able to flag the destination wallet and block further movement; law enforcement operations have in some cases seized and returned stolen funds (the FBI seized $8.2 million connected to pig butchering in 2025); and if you paid via bank wire or credit card rather than direct crypto transfer, your bank may have more options.

How can I identify AI-generated phishing emails targeting crypto users?

The old advice — look for bad grammar and strange phrasing — no longer works. AI-generated phishing emails are now grammatically flawless and highly personalized. Instead, look for these signals: unexpected urgency (“your account will be suspended in 24 hours”), requests for action that feel slightly off from normal workflows, email addresses that use lookalike domains (e.g. “coinbsae.com” or “support@coinbase-secure.net”), and any link that leads somewhere other than the official domain when you hover over it.
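The hover check can also be done programmatically on an HTML email body. The following is a minimal sketch using only Python's standard library. The `suspicious_links` helper and the sample message are assumptions made for illustration, and the check covers only link destinations, not sender addresses or attachments.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class LinkCollector(HTMLParser):
    """Collect (visible text, href) pairs for every <a> tag in an HTML body."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def suspicious_links(html_body: str, official_domain: str) -> list:
    """Return links whose real destination is not the official domain."""
    collector = LinkCollector()
    collector.feed(html_body)
    flagged = []
    for text, href in collector.links:
        host = (urlsplit(href).hostname or "").lower()
        on_official = host == official_domain or host.endswith("." + official_domain)
        if not on_official:
            flagged.append((text, href))
    return flagged


# Hypothetical message: the visible text shows the real site, the href does not.
sample = (
    '<p>Your account will be suspended in 24 hours. '
    '<a href="https://coinbase-secure.net/verify">https://www.coinbase.com/verify</a> '
    'or visit <a href="https://www.coinbase.com/help">Help</a>.</p>'
)
flagged = suspicious_links(sample, "coinbase.com")
```

In this sample, `flagged` contains only the first link: its visible text displays the official address, but the destination is `coinbase-secure.net`.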